Let’s face it – it’s a tough time to be in the democracy business. America’s democratic institutions and norms are under pressure from hyperpolarization, disruptive technologies, and foreign interference, to name a few things. And we’re not alone: new and established democracies all over the world are facing what Varieties of Democracy has dubbed the “third wave of autocratization”. So when I tell people that I’m the director of evaluation and learning at an organization dedicated to strengthening American democracy, the response I often get is a slightly raised eyebrow and the question “so…how’s that going for you?”

It’s a question intended to prompt a pithy response, I suppose, but I’m increasingly inclined to answer it honestly, and thoroughly. Because the truth is that while assessing impact in any kind of complex social system is hard, it’s particularly difficult when the problems you’re trying to solve are the really big ones and the headwinds you’re facing are especially strong. In these situations, real, meaningful impact is unpredictable, nonlinear, and often something that can only be fully understood retrospectively. It’s no wonder that despite robust evaluation and learning practices, many social change organizations still struggle to understand, in real time, whether the work they’re doing is making a difference.

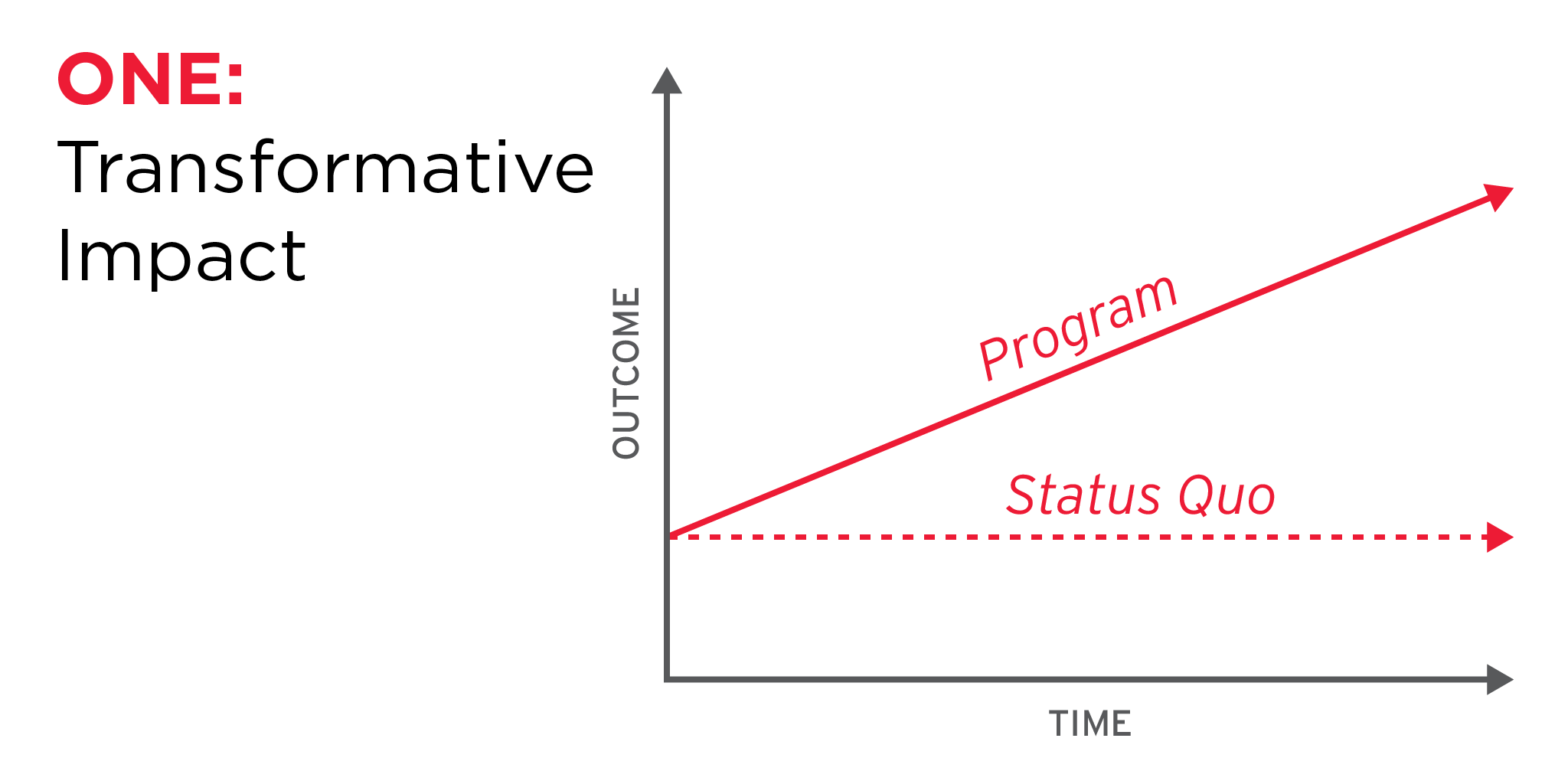

But I’m increasingly convinced that our challenge is not just in measuring the impact that we’re having – it’s in how we think about what impact looks like in the first place. There’s a particular mental model that most people fall into when we talk about impact: we think about what the system will look like as our program progresses over time, compared to what it would look like over the same period without that program. And we default to the expectation that our programs will lead to greater positive increases in desired outcomes compared to the status quo. The rate of change may be incremental, exponential, or something else, but it’s always positive. Unfortunately, this contributes to a widespread assumption in social change work that a program can only be “impactful” if there are measurable increases in expected outcomes.

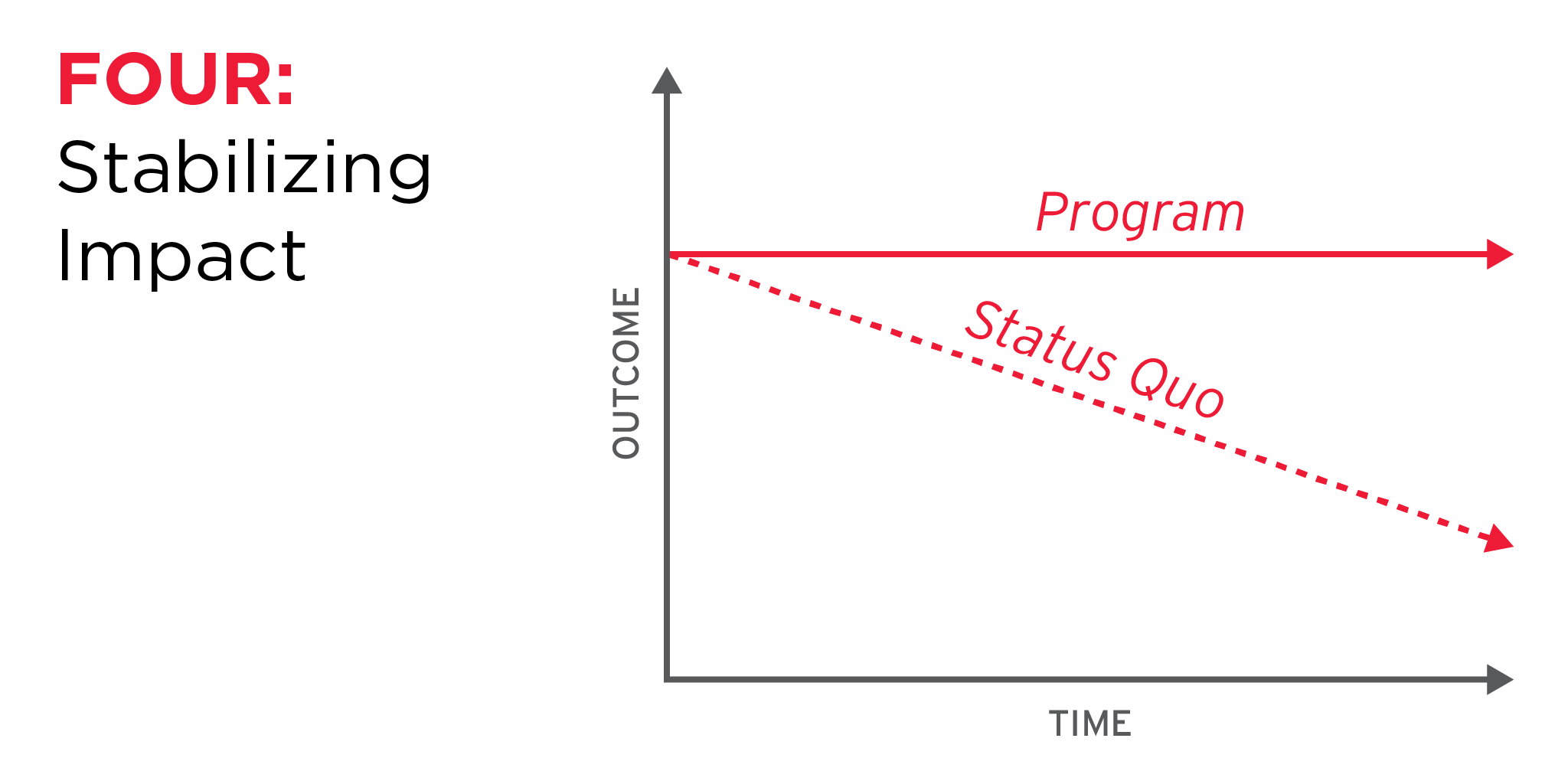

I’ve spoken a lot with my colleagues at Democracy Fund, as well as at other Omidyar Group organizations, about what impact looks like for the work that we do. I’ve recalled evaluations that I’ve led and reviewed other evaluation literature. And what I’ve realized is that impact can manifest in many different ways. Consider, for example, a conversation that I had recently with one of our program teams about an initiative that they had continued to pursue, despite a lack of measurable outcomes in the last year or so. “We never expected this program to fix the system,” they said. “But it’s a finger in the dam. If we don’t do this work, things will keep going downhill.” That’s a perfectly valid strategy, and stabilizing a system in decline can be, in and of itself, an important impact.

So, together with other evaluation and learning experts in other Omidyar Group organizations, I’ve been working on a way to communicate the different ways we think our work will achieve impact, whether that’s transforming a system, stabilizing a system, or something else. With their input, I’ve identified six different “models” of impact, each of which reflects a particular type of status quo and potential trajectory of change.

Six Models of Impact

Transformative: This is “impact” the way it’s most commonly thought of. With transformative impact, we expect a positive change in the system over time compared to the static rate of the counterfactual. The rate of change may be gradual/incremental, exponential, or somewhere in between. For example, we might expect a Get Out the Vote initiative to be transformative, with a positive change on voter turnout over the course of the project.

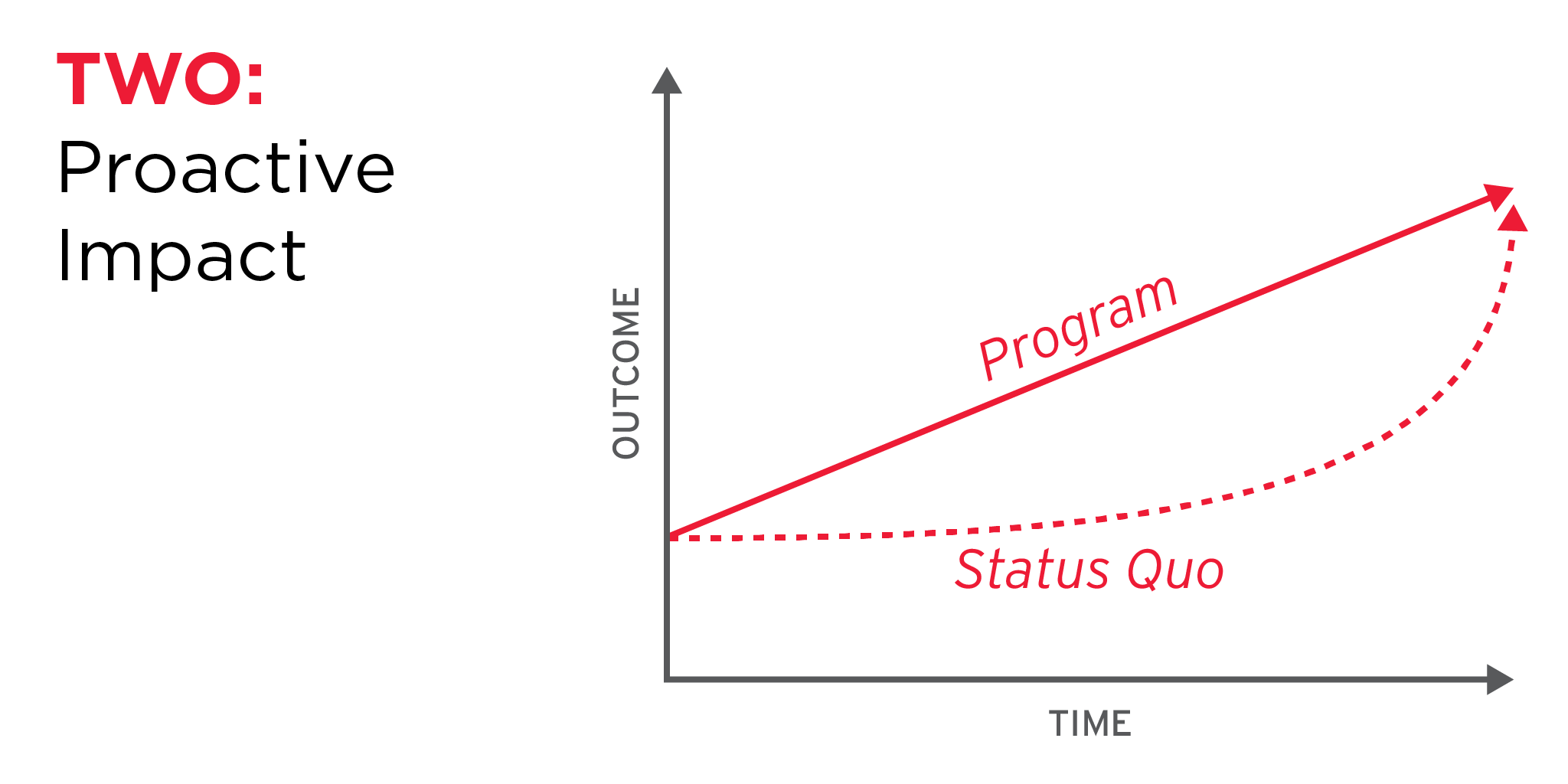

Proactive: Some systems may already be moving in a positive direction, but an intervention can help accelerate that change. In this case, the ultimate change in outcomes is the same, but the accelerated pace and steeper rate of change are meaningful. We might facilitate these programs if the impact then allows us to pursue further opportunities that we are otherwise waiting to implement. For example, a public awareness campaign can help shift public attitudes toward a particular issue more quickly than they might otherwise have done.

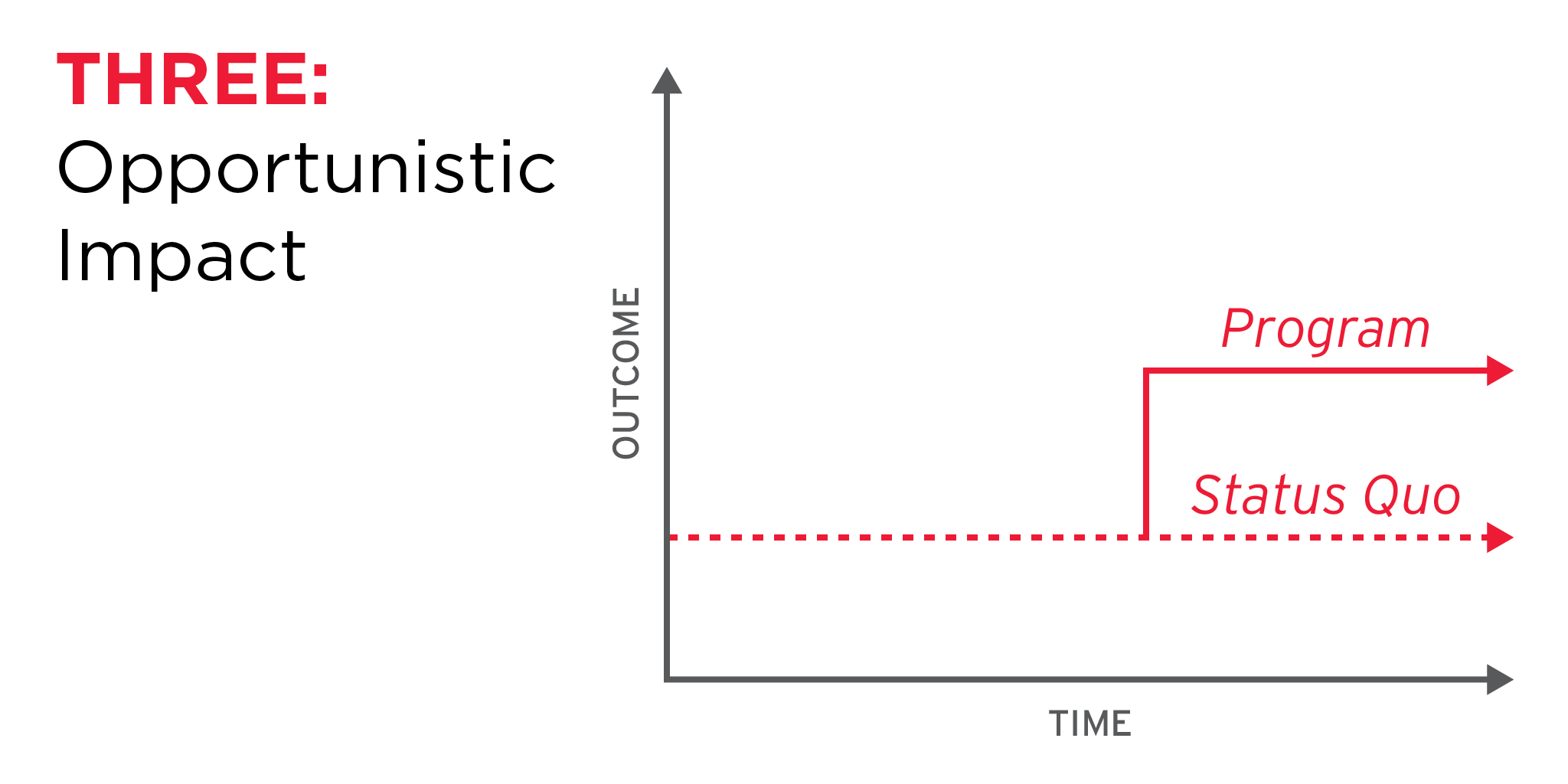

Opportunistic: In the opportunistic model, the program lays the groundwork for change, but the outcomes will be entirely constrained by the context. There may be little perceivable difference in the treatment vs. control scenarios until there is a change in the context that creates an opportunity or removes an obstacle to change. If and when that happens, we expect to see a jump in the value of the outcome in the treatment scenarios compared to the counterfactual. The rate of change therefore looks like a “stair step,” with long periods of stasis interrupted by sudden increases. Public advocacy campaigns often follow an opportunistic model, where ongoing advocacy work lays the groundwork for a trigger event that creates a groundswell of public interest and an opportunity for reform.

Stabilizing: In some situations, we are working to prevent further decline within the system, to disrupt a “vicious cycle,” and/or to hold the system steady until the opportunity arises for positive change. In the stabilizing model, there is no measurable change to the outcome value throughout the course of the program. The program thus appears to have no impact unless you consider the counterfactual and/or the negative historical trendline. We sometimes refer to this as a “finger in the dam” strategy. In this case, the benefit of the program lies in its ability to halt further decline. A civil liberties protection program may follow a stabilizing model: while we may not expect to see substantive expansions of legal protections for marginalized populations, we may be able to maintain the protections that currently exist and ensure their continued enforcement.

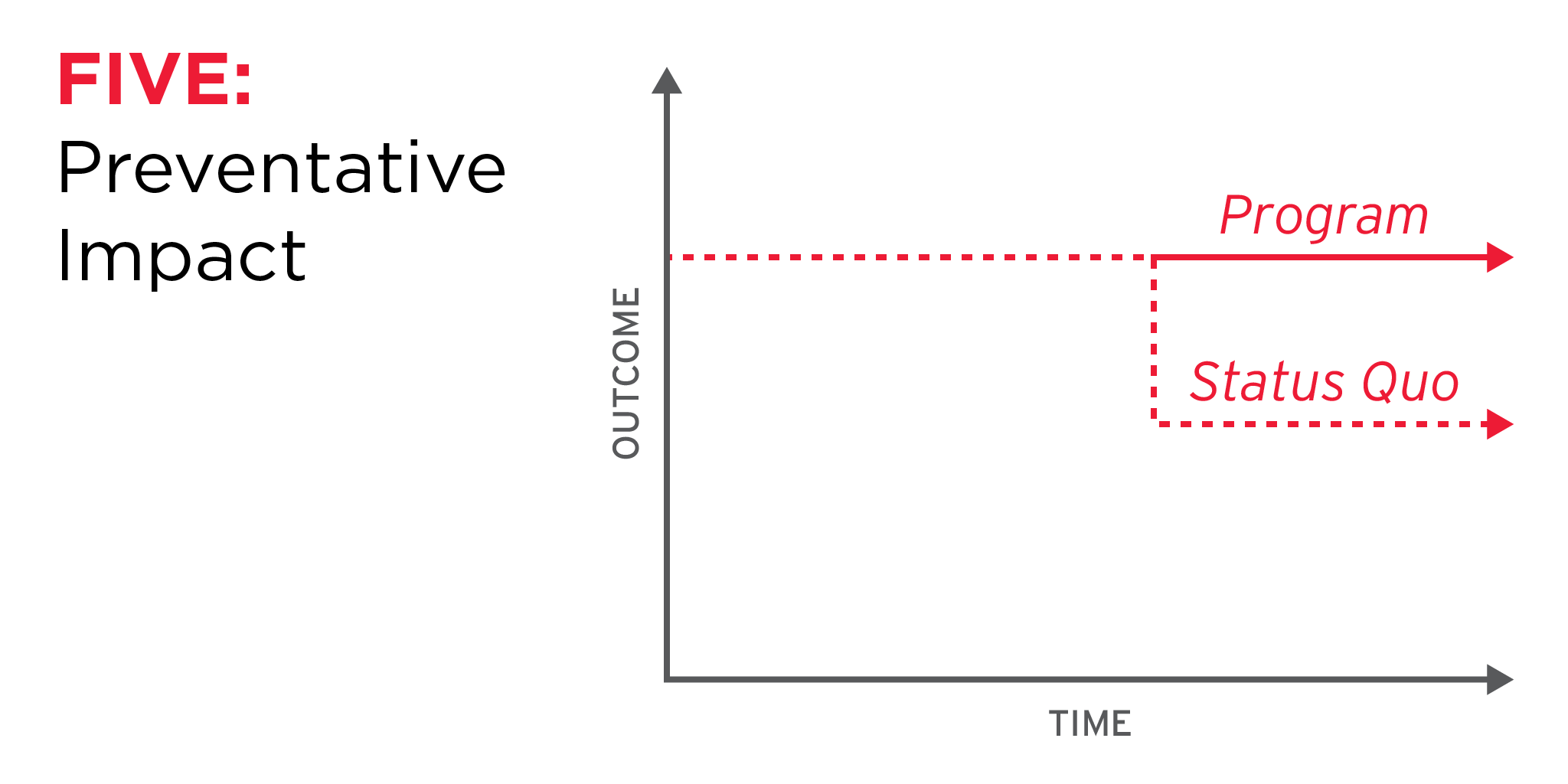

Preventative: Perhaps the opposite of an opportunistic model, in this model the program lays the groundwork to strengthen the status quo and prevent certain events with the goal of having no change in the outcome. In this model, we recognize that there are vulnerabilities in the system that could lead a seemingly healthy system to accelerate suddenly in a negative direction. Crisis communications work, in which a crisis event could lead to a sudden negative shift in public perceptions/behaviors, is an example. This model is similar to the “stabilizing” model, in which the impact is “no change,” but differs in that the catalyzing event that spurs the decline may never actually happen. “Proving” impact in the preventative model is particularly challenging, because the impact in this case is, essentially, that a worst-case scenario did not occur.

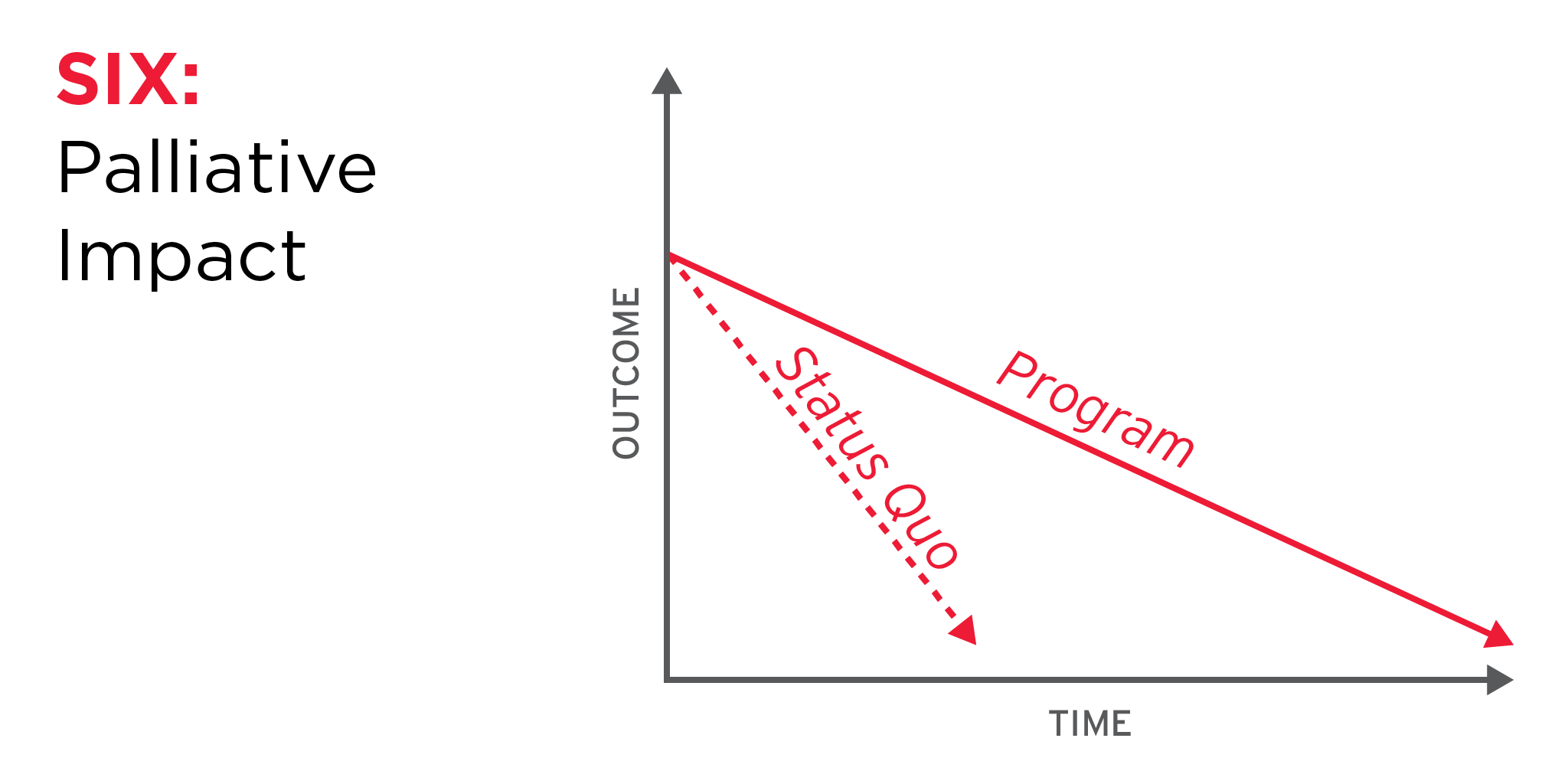

Palliative: One of the realities of working within a systems context is that, occasionally, systems fail with no recourse. The intervention, in these cases, may be focused on slowing the decline of the system in order to mitigate the effects of the eventual collapse, or to buy time for alternatives to emerge or evolve. The palliative model may appear to show a negative relationship between the intervention and the outcomes – that the program is actually doing harm – unless we consider the counterfactual or the historical trendline. An example of the palliative model might be providing direct financial support to a struggling organization or sector until a new, more sustainable business or service model emerges.
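For readers who find it easier to see these trajectories as numbers, here is a rough Python sketch of the six models. It is only an illustration: the starting level of 50, the slopes, the ceiling of 80, and the event at period 6 are all invented assumptions, and the function names (`trajectories`, `observed_change`, `peak_gap`) are hypothetical. For each model it compares what an observer would see (the change in the outcome with the program in place) with the gap between the program scenario and the counterfactual.

```python
# Illustrative only: every number below is an invented assumption, not data.
PERIODS = 10  # hypothetical number of time periods
EVENT = 6     # hypothetical period in which a trigger or crisis event occurs


def trajectories(t):
    """Return (with_program, counterfactual) outcome levels at period t."""
    return {
        # Flat status quo; the program produces steady gains.
        "transformative": (50 + 3 * t, 50),
        # Both reach the same ceiling of 80; the program gets there sooner.
        "proactive": (min(50 + 6 * t, 80), min(50 + 3 * t, 80)),
        # No visible difference until the trigger event, then a jump.
        "opportunistic": (50 + (20 if t >= EVENT else 0), 50),
        # The program holds the outcome steady while the counterfactual declines.
        "stabilizing": (50, 50 - 3 * t),
        # The program averts a sudden drop (assuming the crisis does occur).
        "preventative": (50, 50 - (25 if t >= EVENT else 0)),
        # Both decline; the program slows the decline.
        "palliative": (50 - 2 * t, 50 - 5 * t),
    }


MODELS = list(trajectories(0))


def observed_change(model):
    """Change in the outcome with the program, from first to last period."""
    return trajectories(PERIODS)[model][0] - trajectories(0)[model][0]


def peak_gap(model):
    """Largest gap between the program scenario and the counterfactual."""
    gaps = []
    for t in range(PERIODS + 1):
        with_program, counterfactual = trajectories(t)[model]
        gaps.append(with_program - counterfactual)
    return max(gaps)


if __name__ == "__main__":
    for model in MODELS:
        print(f"{model:15s} observed change: {observed_change(model):+4d}"
              f"   peak gap vs. counterfactual: {peak_gap(model):+4d}")
```

Under these made-up numbers, the stabilizing and preventative models show no observed change at all, and the palliative model shows an observed decline, yet the gap relative to the counterfactual is positive in every case. The proactive model ends at the same level as its counterfactual, so its benefit only shows up partway through.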

Applying Impact Models in an Evaluation and Learning Practice

Of course, in a systems context, it may be hard, for a number of reasons, to prove empirically whether a system is following one of these models. But “impact models” can still be an enormously helpful evaluation and learning tool. They can prompt us to analyze not just the current state of the system, but how that system has evolved over time, and thus calibrate our expectations for how the system might respond to an intervention in the future. They can help us communicate a theory of change more clearly, especially what we think the benefit of a program will actually be. They can help us develop strategies of multiple interventions that work together to strengthen a system. They can also help us make sense of performance data, and place outcome measurements in greater context. Finally, they can help us determine how and when to adapt our strategies, as we move from one model to another or add new models to the mix.

I’m sharing these six models not to propose that any and all programs must follow one of them, but rather to start a conversation about the different ways our work can support positive change in complex systems. If these models resonate for you, or if they don’t, or if you have other models you’ve seen in your work, I’d love to hear from you. Feel free to tweet them to me @lizruedy.